Overview

Building on the foundation laid by the two prior workshops, SpLU-2018 and SpLU-RoboNLP 2019, and focusing on the gaps identified there, we propose the third workshop on Spatial Language Understanding. One of the essential functions of natural language is to express spatial relationships between objects. Spatial language understanding is useful in many research areas and real-world applications, including robotics, navigation, geographic information systems, traffic management, human-machine interaction, query answering and translation systems. Compared to other semantically specialized linguistic tasks, standardizing tasks related to spatial language seems to be more challenging, because it is harder to agree on a set of concepts and relationships and on a formal spatial meaning representation that is domain independent and that supports both quantitative and qualitative reasoning. As a result, research on spatial language learning and reasoning has been diverse, task-specific and, to some extent, not comparable.

Attempts to arrive at a common set of basic concepts and relationships, and to make existing corpora interoperable, can however help avoid duplicated effort within and across fields, and instead allow each field to focus on further developments in automatic learning and reasoning. Existing qualitative and quantitative representation and reasoning models can be used to investigate the interoperability of machine learning and reasoning over spatial semantics. Research in this area could provide insights into many challenges of language understanding in general.

Spatial semantics is also closely connected to the visualization of natural language and to grounding language in perception, which are central to dealing with configurations in the physical world and motivate combining vision and language for richer spatial understanding. In this third edition of the SpLU workshop, we will continue to focus on the same major topics:

- Spatial language meaning representation (continuous, symbolic)

- Spatial language learning

- Spatial language reasoning

- Spatial language grounding and combining vision and language

- Applications of spatial language understanding: QA, dialogue systems, navigation, etc.

Spatial language meaning representation includes research on cognitively and linguistically motivated spatial semantic representations, spatial knowledge representation and spatial ontologies, qualitative and quantitative models used for formal meaning representation, spatial annotation schemes, and efforts to create specialized corpora. Spatial language learning covers both symbolic and sub-symbolic (continuous-representation) techniques and computational models, based on data or on formal models, for spatial information extraction, semantic parsing, and spatial co-reference within a global context that includes discourse and pragmatics. For the reasoning aspect, the workshop emphasizes the role of qualitative and quantitative formal representations in supporting spatial reasoning based on natural language, the possibility of learning such representations from data, and whether these formal representations are needed to support reasoning at all or whether there are alternative approaches. For the multi-modality aspect, questions such as the following will be discussed: (1) Which representations are appropriate for different modalities, and which are modality independent? (2) How can visual information be exploited for spatial language learning and reasoning? All related applications are welcome, including text-to-scene conversion, spatial and visual question answering, spatial understanding in multi-modal settings for robotics and navigation tasks, and language grounding. The workshop aims to encourage discussion across the fields that deal with spatial language together with other modalities. The desired outcome is the identification of shared as well as unique challenges, problems and future directions across these fields and the application domains related to spatial language understanding.

The specific topics include but are not limited to:

- Spatial meaning representations, continuous representations, ontologies, annotation schemes, linguistic corpora

- Spatial information extraction from natural language

- Spatial information extraction in robotics, multi-modal environments, navigational instructions

- Text mining for spatial information in GIS, geographical knowledge graphs

- Spatial question answering, spatial information for visual question answering

- Quantitative and qualitative reasoning with spatial information

- Spatial reasoning based on natural language or multi-modal information (vision and language)

- Extraction of spatial common sense knowledge

- Visualization of spatial language in 2-D and 3-D

- Spatial natural language generation

- Grounded spatial language and dialog systems

Invited Speakers

- James Pustejovsky, Brandeis University

- Julia Hockenmaier, University of Illinois at Urbana-Champaign

- Yoav Artzi, Cornell University

- Bonnie J. Dorr, Florida Institute for Human and Machine Cognition

- Douwe Kiela, Facebook

Schedule (EST)

Please note that all talks will be played on Zoom.

| Time (EST) | Session | Speaker |
|---|---|---|
| 8:00-9:00 AM | QA/Poster | Workshop Organizers |
| 9:00-9:10 AM | Opening Talk | Parisa Kordjamshidi |
| 9:10-10:00 AM | Invited Talk | James Pustejovsky |
| 10:00-10:56 AM | Paper Presentations (1, 2, 3, 11) | |
| 10:56-11:05 AM | Break | |
| 11:05-11:55 AM | Invited Talk | Julia Hockenmaier |
| 11:55 AM-12:51 PM | Paper Presentations (4, 5, 12, 13) | |
| 12:51-1:00 PM | Break | |
| 1:00-1:50 PM | Invited Talk | Yoav Artzi |
| 1:50-2:46 PM | Paper Presentations (6, 7, 8, 14) | |
| 2:46-3:45 PM | QA/Poster | Workshop Organizers |
| 3:45-4:35 PM | Invited Talk | Bonnie J. Dorr |
| 4:35-5:31 PM | Paper Presentations (9, 10, 15, 16) | |
| 5:31-5:45 PM | Break | |
| 5:45-6:35 PM | Invited Talk | Douwe Kiela |
| 6:35-7:03 PM | Paper Presentations (17, 18) | |
| 7:03-8:00 PM | Panel Discussion | |
| 8:00-9:00 PM | QA/Poster | Workshop Organizers |

Accepted Papers (Proceedings)

- An Element-wise Visual-enhanced BiLSTM-CRF Model for Location Name Recognition. Takuya Komada and Takashi Inui

- BERT-based Spatial Information Extraction. Hyeong Jin Shin, Jeong Yeon Park, Dae Bum Yuk and Jae Sung Lee

- A Cognitively Motivated Approach to Spatial Information Extraction. Chao Xu, Emmanuelle-Anna Dietz Saldanha, Dagmar Gromann and Beihai Zhou

- They are not all alike: answering different spatial questions requires different grounding strategies. Alberto Testoni, Claudio Greco, Tobias Bianchi, Mauricio Mazuecos, Agata Marcante, Luciana Benotti and Raffaella Bernardi

- Categorisation, Typicality and Object-Specific Features in Spatial Referring Expressions. Adam Richard-Bollans, Anthony Cohn and Lucía Gómez Álvarez

- A Hybrid Deep Learning Approach for Spatial Trigger Extraction from Radiology Reports. Surabhi Datta and Kirk Roberts

- Retouchdown: Releasing Touchdown on StreetLearn as a Public Resource for Language Grounding Tasks in Street View. Harsh Mehta, Yoav Artzi, Jason Baldridge, Eugene Ie and Piotr Mirowski

Accepted Non-archival Submissions

- SpaRTQA: A Textual Question Answering Benchmark for Spatial Reasoning. Roshanak Mirzaee, Hossein Rajaby Faghihi and Parisa Kordjamshidi

- Geocoding with multi-level loss for spatial language representation. Sayali Kulkarni, Shailee Jain, Mohammad Javad Hosseini, Jason Baldridge, Eugene Ie and Li Zhang

- Vision-and-Language Navigation by Reasoning over Spatial Configurations. Yue Zhang, Quan Guo and Parisa Kordjamshidi

Accepted Findings Submissions

- Language-Conditioned Feature Pyramids for Visual Selection Tasks. Taichi Iki and Akiko Aizawa

- A Linguistic Analysis of Visually Grounded Dialogues Based on Spatial Expressions. Takuma Udagawa, Takato Yamazaki and Akiko Aizawa

- Visually-Grounded Planning without Vision: Language Models Infer Detailed Plans from High-level Instructions. Peter A. Jansen

- Decoding Language Spatial Relations to 2D Spatial Arrangements. Gorjan Radevski, Guillem Collell, Marie-Francine Moens and Tinne Tuytelaars

- LiMiT: The Literal Motion in Text Dataset. Irene Manotas, Ngoc Phuoc An Vo and Vadim Sheinin

- ARRAMON: A Joint Navigation-Assembly Instruction Interpretation Task in Dynamic Environments. Hyounghun Kim, Abhay Zala, Graham Burri, Hao Tan and Mohit Bansal

- Robust and Interpretable Grounding of Spatial References with Relation Networks. Tsung-Yen Yang, Andrew S. Lan and Karthik Narasimhan

- RMM: A Recursive Mental Model for Dialogue Navigation. Homero Roman Roman, Yonatan Bisk, Jesse Thomason, Asli Celikyilmaz and Jianfeng Gao

Submission Procedure

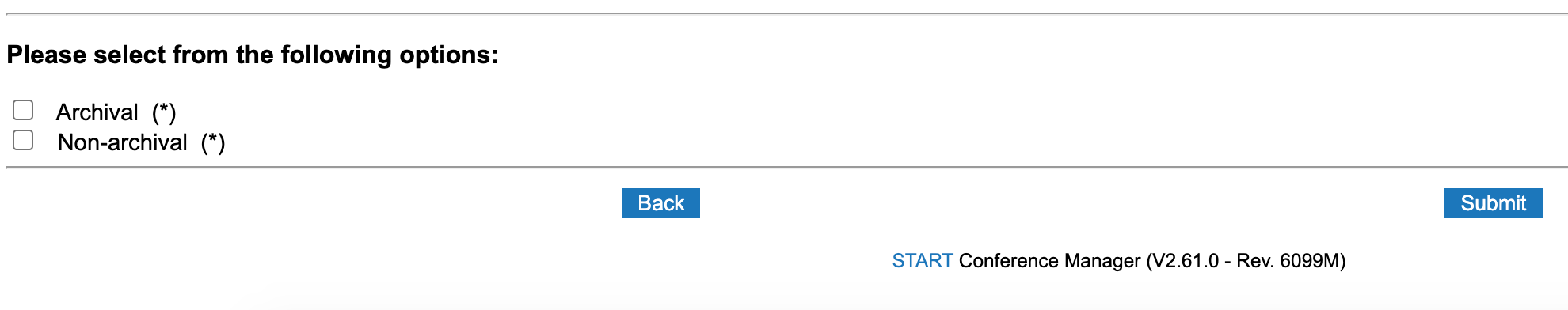

We encourage contributions in the form of technical papers (EMNLP style, 8 pages excluding references) or shorter papers (EMNLP style, 4 pages maximum) presenting position statements, previously unpublished work or demos. Please format submissions with the EMNLP style files and submit them via Softconf.

Non-archival option: EMNLP workshops are traditionally archival. To allow dual submission of work to SpLU and other conferences or journals, we also offer a non-archival track. Space permitting, non-archival submissions will still be presented at the workshop and hosted on the workshop website, but they will not be included in the official proceedings. Please submit through Softconf and indicate at the bottom of the submission form that the paper is a cross submission.

Important Dates

- Submission deadline: August 21, 2020 (extended from August 15, 2020)

- Notification: October 1, 2020

- Camera-ready deadline: October 12, 2020

- Workshop day: November 19, 2020

Organizing Committee

| Affiliation | Email |
|---|---|
| Michigan State University | kordjams@msu.edu |
| Institute for Human and Machine Cognition | abhatia@ihmc.us |
| University of Pittsburgh | malihe@pitt.edu |
| Google | jasonbaldridge@google.com |
| UNC Chapel Hill | mbansal@cs.unc.edu |
| KU Leuven | sien.moens@cs.kuleuven.be |

Program Committee

| Affiliation |
|---|
| The University of Arizona |
| University of Trento |
| Örebro University - CoDesign Lab |
| Carnegie Mellon University |
| University of Groningen |
| Microsoft Research |
| University of Michigan |
| Simon Fraser University |
| University of Leeds |
| KU Leuven |
| University of Gothenburg |
| Institute for Human and Machine Cognition |
| University of Zurich |
| Universität Bremen |
| University of Maryland Baltimore |
| Institute for Human and Machine Cognition |
| University of Gothenburg |
| University of Illinois at Urbana-Champaign |
| Bytedance |
| University of Lisbon |
| Google Inc. |
| Lionbridge AI |
| Open University (The Netherlands) |
| Institute for Human and Machine Cognition |
| UT Health |
| Stanford University |
| Massey University |
| University of Washington |
| ARL |